- The U.S. Department of Energy’s (DOE) Argonne National Laboratory is developing a deep learning system to monitor birds at solar facilities.

- The three-year project will combine computer vision techniques with a form of artificial intelligence (AI).

- The system will monitor solar sites for birds and collect data on what happens when they fly by, perch on or collide with solar panels.

As more solar energy systems are installed across the United States, scientists are quantifying the effects on wildlife. Current data collection methods are time-consuming, but the U.S. Department of Energy’s (DOE) Argonne National Laboratory has proposed a solution. The lab has been awarded $1.3 million from DOE’s Solar Energy Technologies Office to develop technology that can cost-effectively monitor avian interactions with solar infrastructure.

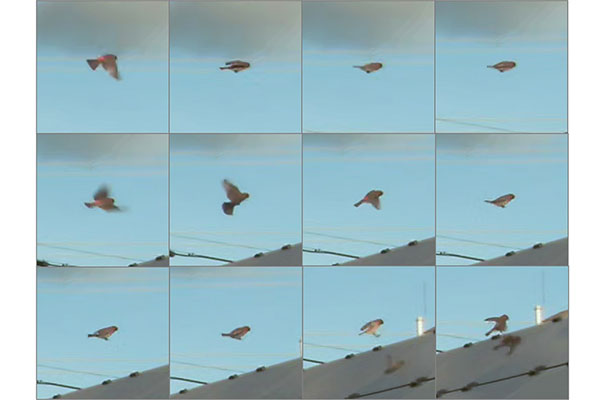

The three-year project, which began this spring, will combine computer vision techniques with a form of artificial intelligence (AI) to monitor solar sites for birds and collect data on what happens when they fly by, perch on or collide with solar panels.

“There is speculation about how solar energy infrastructure affects bird populations, but we need more data to scientifically understand what is happening,” said Yuki Hamada, a remote-sensing scientist at Argonne, who is leading the project.

An Argonne study published in 2016 estimated, based on the limited data available, that collisions with photovoltaic panels at U.S. utility-scale solar facilities kill between 37,800 and 138,600 birds per year. While that is a relatively low number compared with building and vehicle strikes, which kill hundreds of millions of birds annually, learning more about how and when those deaths occur could help prevent them.

“The fieldwork necessary to collect all this information is very time- and labor-intensive, requiring people to walk the facilities and search for bird carcasses,” said Leroy Walston, an Argonne ecologist, who led the study. “As a result, it’s quite costly.”

Such methods are also limited in how often and how extensively they can be carried out, and they offer little insight into the behavior of live birds around solar panels.

The new project aims to reduce the need for human surveys by using cameras and computer models that can collect more and better data at a lower cost. Achieving that involves three tasks: detecting moving objects near solar panels; identifying which of those objects are birds; and classifying events (such as perching, flying through or colliding). Scientists will build the models using deep learning, an AI method inspired by the neural networks of the human brain, which makes it possible to “teach” computers to spot birds and behaviors by training them on similar examples.
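To make the three tasks concrete, here is a minimal, hypothetical sketch in plain Python. It is not Argonne’s or Boulder AI’s code. It assumes grayscale frames stored as lists of lists, a clean reference frame of the empty scene, and at most one moving object per frame. Stage 1 uses simple background subtraction. Stage 2 is a size filter standing in for the trained deep learning classifier the project describes. Stage 3 is an invented rule of thumb: a track that comes to rest in the panel area after a fast approach is flagged as a possible collision, and after a slow approach as a perch. All function names, thresholds and the `panel_top_row` parameter are illustrative assumptions.

```python
import math
from dataclasses import dataclass
from typing import List, Optional, Tuple

Frame = List[List[int]]  # grayscale image, pixel values 0-255


@dataclass
class Detection:
    row: float  # centroid of foreground pixels
    col: float
    size: int   # number of foreground pixels


def detect_foreground(background: Frame, frame: Frame, threshold: int = 30) -> Optional[Detection]:
    """Stage 1: background subtraction. Assumes at most one moving object per frame."""
    pixels = [
        (r, c)
        for r, row in enumerate(frame)
        for c, value in enumerate(row)
        if abs(value - background[r][c]) > threshold
    ]
    if not pixels:
        return None
    n = len(pixels)
    return Detection(sum(r for r, _ in pixels) / n, sum(c for _, c in pixels) / n, n)


def is_bird(det: Detection, min_px: int = 2, max_px: int = 50) -> bool:
    """Stage 2 placeholder: a size filter standing in for a trained deep learning classifier."""
    return min_px <= det.size <= max_px


def classify_event(track: List[Detection], panel_top_row: float, fast: float = 3.0,
                   still_tol: float = 0.5, min_still: int = 2) -> str:
    """Stage 3: hypothetical rule-based event labels from a track of bird positions."""
    if len(track) < 2:
        return "unknown"
    if track[-1].row < panel_top_row:
        return "fly-by"  # track ended outside the (assumed) panel area
    steps = [math.hypot(b.row - a.row, b.col - a.col) for a, b in zip(track, track[1:])]
    still = 0  # count trailing frames in which the bird did not move
    for step in reversed(steps):
        if step > still_tol:
            break
        still += 1
    if still < min_still or still == len(steps):
        return "unknown"  # still moving, or never moved at all
    approach_speed = steps[-still - 1]  # last movement before coming to rest
    return "possible collision" if approach_speed >= fast else "perch"


def run_pipeline(frames: List[Frame], background: Frame, panel_top_row: float) -> str:
    """Runs stages 1-3 over a short clip and returns one event label."""
    track = []
    for frame in frames:
        det = detect_foreground(background, frame)
        if det is not None and is_bird(det):
            track.append(det)
    return classify_event(track, panel_top_row)


def make_frame(size: int, blob: Optional[Tuple[int, int]] = None) -> Frame:
    """Synthetic test frame: dark background with an optional 2x2 bright 'bird'."""
    frame = [[0] * size for _ in range(size)]
    if blob is not None:
        r, c = blob
        for dr in (0, 1):
            for dc in (0, 1):
                frame[r + dr][c + dc] = 255
    return frame
```

For example, a synthetic “bird” that drops four rows per frame into the panel area (rows 8 and below in a 12 x 12 frame) and then stops is labeled `"possible collision"`, while one that descends two rows per frame before stopping is labeled `"perch"`. Background subtraction is used here instead of frame-to-frame differencing so that a bird that lands keeps being detected, which is what lets the sketch tell a stop from a departure.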

In an earlier Argonne project, researchers trained computers to detect drones flying overhead. The avian-solar interaction project will build on this capability while bringing in new complexities, noted Adam Szymanski, an Argonne software engineer who developed the drone-detection model. The cameras at solar facilities will be angled toward panels rather than pointed upward, so the backgrounds will be more complex. For example, the system will need to tell the difference between birds and other moving objects in the field of view, such as clouds, insects or people.

Initially, the researchers will set up cameras at one or two solar energy sites, recording and analyzing video. Hours of video will need to be processed and classified by hand in order to train the computer model.

Because collisions are relatively rare, Hamada said they could be simulated using an object, such as a toy bird, so that the system has initial information to use as training examples.

“Model training requires a significant amount of computing power,” Szymanski said. “We’ll be able to use some of the larger computers here at Argonne’s Laboratory Computing Resource Center for that.”

Once the model is trained, it will run on the cameras themselves, classifying interactions on the fly from a live video feed. This raises another challenge, one involving edge computing, in which information is processed close to where it is collected.

“We won’t have the luxury of recording a lot of video, sending it back to the lab and analyzing it later,” Szymanski added. “We have to design the model to be more efficient, so it can be executed in real-time at the edge.”
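The edge-computing constraint Szymanski describes can be illustrated with a small, hypothetical Python sketch: instead of storing video, an on-camera loop consumes one detection per frame (assumed to come from the on-device model) and keeps only a constant amount of state, sending a compact summary when an object’s track ends. The class name, the `gap_frames` setting and the summary fields are illustrative assumptions, not part of the Argonne system.

```python
from typing import Callable, Optional, Tuple

Point = Tuple[float, float]  # (row, col) position reported by the on-camera model


class EdgeEventReporter:
    """Hypothetical on-camera loop that keeps no video, only a tiny per-track summary."""

    def __init__(self, send: Callable[[dict], None], gap_frames: int = 3) -> None:
        self.send = send                # e.g. a function that uploads one small record
        self.gap_frames = gap_frames    # frames with no detection before a track is closed
        self._frame = 0
        self._missed = 0
        self._count = 0
        self._start: Optional[int] = None
        self._first: Optional[Point] = None
        self._last: Optional[Point] = None

    def process(self, detection: Optional[Point]) -> None:
        """Handle one frame's detection (or None) in constant time and memory."""
        if detection is not None:
            if self._count == 0:  # a new track begins
                self._start, self._first = self._frame, detection
            self._last = detection
            self._count += 1
            self._missed = 0
        elif self._count:
            self._missed += 1
            if self._missed >= self.gap_frames:
                self.flush()
        self._frame += 1

    def flush(self) -> None:
        """Send the summary of the current track, if any, and reset."""
        if self._count == 0:
            return
        self.send({"start_frame": self._start, "detections": self._count,
                   "first": self._first, "last": self._last})
        self._count = 0
        self._missed = 0
        self._start = self._first = self._last = None
```

Because each track is reduced to a few numbers, the data leaving the camera grows with the number of events rather than with hours of footage. A real deployment would likely attach the on-device event label (such as a possible collision) to each summary so that facility staff could be notified.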

In the future, the technology could be advanced further by leveraging the Sage Cyberinfrastructure initiative, led by Northwestern University, and Argonne’s Waggle sensor system, which could provide a faster, more powerful edge computing platform and a multidisciplinary software stack.

The Argonne project was selected as part of the Solar Energy Technologies Office Fiscal Year 2019 funding program, which includes funding to develop data collection methods to assess the impacts of solar infrastructure on birds. A better understanding of avian-solar interactions could reduce the siting, permitting and wildlife mitigation costs for solar energy facilities.

The automated avian monitoring technology is being developed in collaboration with Boulder AI, a company with deep experience producing AI-driven cameras and the algorithms that run on them. Several solar energy facilities also support the project by granting permission to collect video and evaluate the technology onsite.

To ensure sound technology development, the team will also have at its disposal a technical advisory committee composed of machine-learning experts from Northwestern University and the University of Chicago, as well as solar technology and avian ecology experts from the Cornell Lab of Ornithology, conservation groups, the solar industry and government agencies.

At the end of the project, Argonne will have developed a camera system that can detect, monitor and report bird activities around solar facilities. The system will also notify solar facility staff when collisions happen. The technology will then be ready for large-scale field trials at many solar facilities, said Hamada.

The resulting data could be used to detect patterns and begin answering key questions: Are certain types of birds more prone to strikes? Do collisions increase at certain times of the day or year? Does geographic location of the solar panels play a role in the types of interactions? Do solar energy facilities provide viable habitat for birds?

The technological framework could also be used to monitor other wildlife by retraining the AI models with appropriate data.

“Once patterns are identified, that knowledge can be used to design mitigation plans,” Hamada said. “Down the road, once a mitigation strategy is in place, the same system can be used to evaluate the strategy’s effectiveness.”
